Whether Google penalizes AI content is the single most-asked SEO question of the last three years. The short answer is no: Google does not penalize content for being written with AI. The longer answer is more useful, because the things Google does penalize are exactly what AI made dramatically easier to produce.

This article is the honest version: what Google has actually said, what the public data shows, what actually gets penalized, and a five-step workflow for using AI in SEO content without getting hit. We've tested this across thousands of articles on our own sites, on client sites we ran during our agency days, and now across the customer base running on Soro. What's below is what's stayed true through three years of algorithm updates.

The short answer

Google does not penalize content for being written or assisted by AI. It evaluates content the same way it always has: by whether the content helps the searcher, demonstrates real experience and expertise, and delivers something a person can actually use.

Google said so itself in February 2023, and it has repeated the position in its guidance since. The clearest signal is that Google now generates AI Overviews at the top of the SERP and offers AI Mode, a fully AI-generated search experience. A search engine that punished AI-generated content while putting AI-generated answers at the top of its own results would be contradicting itself.

What does get penalized is the same thing that's always been penalized: thin pages built to rank, content that doesn't match search intent, and sites publishing volume without quality. AI made that kind of content cheaper, faster, and easier to produce. Penalties are about the output, not the tool.

What the data actually shows

The most useful study on this came out of Ahrefs in 2025. They analyzed 600,000 top-ranking pages across 100,000 keywords and ran every page through an AI content detector.

The numbers:

- 86.5% of top-ranking pages contained some AI-generated content

- Only 13.5% were classified as fully human-written

- 4.6% were classified as pure AI (no human input detected)

- The correlation between AI usage and ranking position was 0.011, which is effectively zero: AI share tells you almost nothing about where a page ranks

If Google were actively suppressing AI content, you would not see this distribution. You would see a clear link between higher AI share and worse (numerically higher) ranking positions, and almost no pure-AI pages in the top 20. Neither happens.
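The sign convention trips people up, so here is what "suppression" would look like numerically. Rank positions count upward, with position 1 being best. If AI content were being pushed down, pages with a higher AI share would sit at larger position numbers, and a correlation computed on raw positions would come out clearly positive. This is a minimal sketch on made-up toy numbers. It assumes a plain Pearson correlation because this article doesn't say which statistic Ahrefs used.

```python
from math import sqrt
from statistics import fmean


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length sequences with at least 2 items")
    mx, my = fmean(xs), fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


# Toy data (hypothetical): each page's AI share vs. its rank position (1 = best).
ai_share = [0.0, 0.2, 0.4, 0.6, 0.8, 1.0]
if_suppressed = [1, 3, 6, 9, 14, 20]  # more AI, worse (larger) position
if_unrelated = [4, 1, 6, 6, 1, 4]     # position has nothing to do with AI share

print(round(pearson(ai_share, if_suppressed), 2))  # strongly positive, close to 1
print(round(pearson(ai_share, if_unrelated), 2))   # about 0
```

A value as small as Ahrefs' 0.011 sits much closer to the second case than the first.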

The one minor signal Ahrefs did find is that the very top result (position #1) tends to have slightly less AI content on average than positions 2 through 20. The effect is small. It's also consistent with our own experience: pure AI generation can reach the top 10, but holding position 1 against editorial-quality competitors usually takes a human pass for originality and voice.

What we saw firsthand across 50,000+ articles

The pattern below is drawn from sites we ran ourselves, sites we ran for clients during our agency years, and a cohort of Soro customers who opted into a long-running experiment on AI content and ranking outcomes. Combined, the dataset covers more than 50,000 published AI-assisted articles across 2,000 sites. It spans late 2023 through April 2026 and roughly twenty industries, mostly English-language SMB and SaaS sites in the Domain Rating 10–60 range. We tracked rankings in Google Search Console and Ahrefs Rank Tracker, audited content quarterly, and kept publishing through every Google core update in that window: March 2024 (which folded the helpful content system into the main ranking system), August 2024, November 2024, March 2025, June 2025, December 2025, and the March 2026 core update that wrapped up days before this article was published.

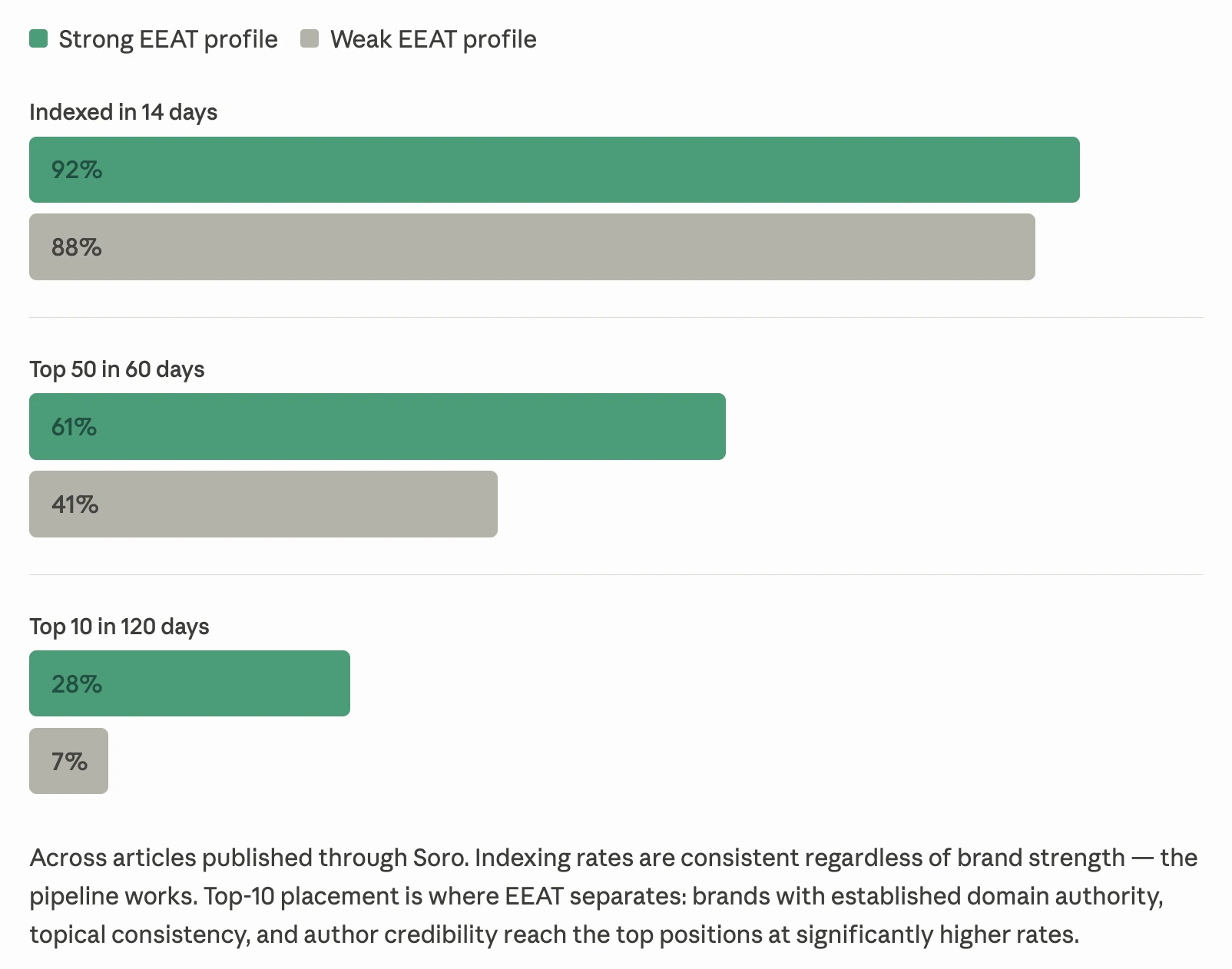

We tracked every article through three checkpoints: did it index within 14 days, did it reach the top 50 within 60 days, and did it crack the top 10 within 120 days. To see which variable mattered most, we split the cohort by EEAT strength. On one side were sites with established domain authority, consistent topical focus, real author bylines, and a record of useful content. On the other were sites with weaker brand profiles.

The two funnels start nearly level. Indexing rates are almost identical, comfortably above 90% for both groups. The gap opens at the top 50 (61% vs 41%) and widens dramatically at the top 10 (28% vs 7%), which means strong-EEAT brands reached the top 10 at 4× the rate of weak-EEAT brands. Indexing is a pipeline question, and the pipeline works regardless of brand strength. Top-10 placement is an EEAT question, and the entire gap opens after indexing.
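The funnel arithmetic is easy to reproduce on your own tracking data. This sketch assumes a hypothetical per-article record with three checkpoint flags and an EEAT cohort label. The field names are ours, for illustration only, and are not an export format from Search Console or Ahrefs. The toy counts mirror the top-50 and top-10 rates reported above. The indexing counts are placeholders, because the text says only that both cohorts cleared 90%.

```python
from dataclasses import dataclass

STAGES = ("indexed_14d", "top50_60d", "top10_120d")


@dataclass
class Article:
    cohort: str        # hypothetical label: "strong" or "weak" EEAT
    indexed_14d: bool  # indexed within 14 days
    top50_60d: bool    # reached the top 50 within 60 days
    top10_120d: bool   # reached the top 10 within 120 days


def funnel(articles: list[Article], cohort: str) -> dict[str, float]:
    """Share of one cohort's articles that cleared each checkpoint."""
    group = [a for a in articles if a.cohort == cohort]
    if not group:
        return {stage: 0.0 for stage in STAGES}
    return {stage: sum(getattr(a, stage) for a in group) / len(group) for stage in STAGES}


def toy_cohort(cohort: str, n: int, indexed: int, top50: int, top10: int) -> list[Article]:
    """Hypothetical data: the first k articles clear a stage, the rest don't."""
    return [Article(cohort, i < indexed, i < top50, i < top10) for i in range(n)]


articles = toy_cohort("strong", 100, 93, 61, 28) + toy_cohort("weak", 100, 91, 41, 7)
strong, weak = funnel(articles, "strong"), funnel(articles, "weak")
print(strong)
print(weak)
print(f"top-10 ratio: {strong['top10_120d'] / weak['top10_120d']:.1f}x")
```

Each stage is computed independently rather than as a share of the previous stage. The top-50 and top-10 checkpoints use different time windows, so an article can reach the second without having cleared the first.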

The variable that explained most of the top-10 variance was not whether AI was used. It was the editorial discipline that defines a strong EEAT profile in the first place: a real point of view, original detail, fact verification, and author credibility. The same things Google has told site owners to do since 2011, applied to a faster production tool.

Three patterns showed up underneath that gap, across keyword brackets, industries, and update cycles.

Pure prompt-and-publish content rarely ranked well. Articles produced from a prompt like "write a 1,500-word post about [keyword]" and shipped without editing usually indexed fine and got nowhere. Not penalized in the technical sense. Just invisible: generic, surface-level, no point of view, no original detail. These were the pages the helpful content system (HCS) was designed to filter, and after the March 2024 core update folded HCS into the main ranking system, this kind of content sank even further.

AI-assisted content edited for voice and originality performed about the same as fully human content. The writer used AI for the first draft, then spent 30–90 minutes adding a real point of view, original examples, internal data, and tone adjustments. Those articles ranked indistinguishably from fully human-written articles over the same period. In aggregate they sometimes did better, because the same writer could ship two to three times as many.

Pages that did get hit during 2025's core updates were almost never AI-only. When we audited the rank-loss cohort line by line, the common factor was not the production method. It was that a reader landing on the page would feel their time wasn't well spent. AI status was orthogonal. Some of the worst pages were entirely human-written in 2021–2022. Some of the best were AI-assisted in 2025.

That last pattern is the most counterintuitive and the most important one. Google didn't punish AI use during 2025. Google punished low-effort content. AI was simply the most popular production method for low-effort content in that window, which made the correlation easy to mistake for causation.

A note on methodology. We can't cleanly isolate AI involvement from every other variable. We don't have a randomized control group, and we wouldn't trust anyone who claimed to have one for this question. What we have is two and a half years of ranking data across the 2,000 sites in the experiment cohort, seven Google core updates between March 2024 and March 2026, and a set of patterns that held through every one of them. It isn't peer-reviewed science, but it's operationally valid for SEO decisions, which is what this article is about.

What actually gets penalized

A useful exercise: read Google's helpful content system documentation and notice what's not on the list. "Was this written by AI" is nowhere. "Did this help the searcher" is the entire spine.

The patterns that consistently get downranked, with or without AI involvement:

Content that doesn't match what the searcher wanted. A page targeting "how to fix a leaky faucet" that opens with three paragraphs of plumbing history is mismatched. Doesn't matter who wrote it.

Content with no original point of view. If your article on "best CRM for small business" reads like the average of every other CRM listicle on page one, you're describing the SERP back to itself. Google increasingly recognizes that pattern and downranks it.

Pages built primarily to rank. Programmatically generated city-by-city pages with the same content swapped between locations. Auto-spun comparison pages with no real comparison. Tag pages with a paragraph of filler. AI made these easier. They were already getting penalized before AI.
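One way to catch the swapped-city pattern on your own site is to compare pages for near-duplicate text. This minimal sketch uses word shingles and Jaccard similarity, a standard near-duplicate technique. It is not a description of how Google evaluates pages. The 0.8 threshold and the sample pages are assumptions you would tune and replace.

```python
import re
from itertools import combinations


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Set of overlapping k-word sequences from lowercased text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    """Size of the intersection divided by size of the union."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def near_duplicates(pages: dict[str, str], threshold: float = 0.8) -> list[tuple[str, str, float]]:
    """Pairs of URLs whose body text overlaps at or above `threshold`."""
    sigs = {url: shingles(body) for url, body in pages.items()}
    pairs = []
    for u, v in combinations(sigs, 2):
        score = jaccard(sigs[u], sigs[v])
        if score >= threshold:
            pairs.append((u, v, round(score, 2)))
    return pairs


# Hypothetical pages: two templated city pages and one genuinely distinct article.
TEMPLATE = (
    "Looking for a reliable plumber in {city}? Our licensed team handles leaky faucets, "
    "burst pipes, water heaters and drain cleaning. We offer same-day service, upfront "
    "pricing and a one-year guarantee on all repairs. Call today for a free quote."
)
pages = {
    "/plumber-austin": TEMPLATE.format(city="Austin"),
    "/plumber-denver": TEMPLATE.format(city="Denver"),
    "/tankless-vs-tank": "Our guide compares tankless and tank water heaters, "
                         "with real install costs from jobs in our service area.",
}
print(near_duplicates(pages))  # [('/plumber-austin', '/plumber-denver', 0.86)]
```

Swapping a single city name leaves most shingles shared. That is why templated location pages score far above anything a human would write independently for each city.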

Fabricated facts. AI hallucinations are a real risk. We've seen articles cite statistics that don't exist, attribute quotes to people who never said them, and reference studies that aren't real. That's not an AI penalty. That's an "incorrect information that hurts users" problem, and Google's algorithms are increasingly good at flagging it through cross-referencing trusted sources.

Sites publishing volume without quality. A new site shipping 50 articles a week with no editorial filter triggers a different concern: the helpful content system can apply site-wide signals. One bad page doesn't tank your domain. Two hundred bad pages in three months can.

The throughline: every single one of these patterns existed before AI. AI made them faster to produce. The penalties are about what the page is, not how it was made.

How to use AI for content without getting hit

The version of this question that actually matters is practical: what does the workflow look like? Here's the version we converged on after more than two years of testing, in order of importance.

Write to the search intent, not the keyword. Before generating anything, look at the top 5 results for the target keyword. What is the searcher actually trying to do? An AI model will write what the average article on the topic says, which is fine for entry-level pages and inadequate for anything competitive. Brief the model on the specific intent before you start.

Add something the model can't have. Internal data, real examples, opinions, original frameworks, screenshots from your own product, interview quotes from your team, photos of work you've actually done. The articles that ranked best had at least one element no general AI could have produced. A page that's 100% reproducible from a prompt is a page that adds nothing to the SERP.

Edit aggressively for voice. Generic AI prose has tells: too many three-item lists, too many "moreover" transitions, hedge phrases like "it's important to note." Cut them. Read the article out loud. Rewrite anything that doesn't sound like a person from your team would have said it.
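A simple phrase counter can surface those tells before the read-aloud pass. This is a sketch, not a detector. The phrase list is a hypothetical starting point: the two tells named above plus "furthermore". It only flags candidates for a person to rewrite, and it doesn't attempt the harder job of spotting three-item lists.

```python
import re
from collections import Counter

# Hypothetical starter list; extend it with the tells your editors keep cutting.
TELLS = ["moreover", "it's important to note", "furthermore"]


def count_tells(text: str, tells: list[str] = TELLS) -> Counter:
    """Count whole-phrase, case-insensitive hits for each tell phrase."""
    lowered = text.lower().replace("\u2019", "'")  # treat curly apostrophes as straight
    counts = Counter()
    for phrase in tells:
        hits = len(re.findall(r"\b" + re.escape(phrase) + r"\b", lowered))
        if hits:
            counts[phrase] = hits
    return counts


draft = "Moreover, it's important to note that intent matters. Moreover, briefs help."
print(count_tells(draft).most_common())  # [('moreover', 2), ("it's important to note", 1)]
```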

Fact-check anything specific. Numbers, dates, attributions, study citations, product features. AI models invent these confidently. We have a hard rule: any specific claim gets a source verification before publish. That single rule cut our error rate on AI-assisted articles to near-zero.
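A pre-publish script can make the source-verification rule harder to skip. It pulls every sentence that contains a number or an attribution phrase onto a checklist. This is a rough sketch, and the heuristics are our assumptions. It will over-flag, which is the safe direction for this job. A person still does the actual verification.

```python
import re

# Heuristics (assumptions): any digit, or a common attribution/citation word.
HAS_NUMBER = re.compile(r"\d")
HAS_ATTRIBUTION = re.compile(
    r"\b(according to|said|says|study|survey|report(?:ed|s)?)\b", re.IGNORECASE
)


def claims_to_verify(text: str) -> list[str]:
    """Sentences that contain a specific, checkable claim."""
    # Split after ., ! or ? followed by whitespace, so "86.5%" stays intact.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if HAS_NUMBER.search(s) or HAS_ATTRIBUTION.search(s)]


# Toy draft (hypothetical claims).
draft = ("Email still works. Open rates rose 12% in 2024. "
         "According to our CEO, churn fell. Write clearly!")
for claim in claims_to_verify(draft):
    print("VERIFY:", claim)
```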

Cap volume to what your editorial process can review. A small team using AI to ship 20 high-quality articles a month is a competitive advantage. The same team using AI to ship 200 unreviewed articles a month is asking for a helpful-content downranking. The bottleneck should be human review, not generation.
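The cap comes down to simple arithmetic: the review hours you have, divided by the time one article needs. This sketch uses a hypothetical team size and reuses the 30–90 minute editing pass described earlier as the per-article cost. Put your own numbers in, including time for fact-checking.

```python
def monthly_cap(editors: int, review_hours_per_editor: float, minutes_per_article: float) -> int:
    """Most articles per month the team can give a full editorial pass (rounded down)."""
    if minutes_per_article <= 0:
        raise ValueError("minutes_per_article must be positive")
    total_minutes = editors * review_hours_per_editor * 60
    return int(total_minutes // minutes_per_article)


# Hypothetical team: two editors, ten review hours each per month.
for minutes in (30, 60, 90):
    print(minutes, "min/article ->", monthly_cap(2, 10, minutes), "articles/month")
```

At an hour per article, two editors with ten review hours each land at exactly the 20-articles-a-month case. If generation outpaces that number, the surplus goes out unreviewed.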

That's the playbook in five steps. None of it is dramatic. All of it is the same editorial discipline that has always separated good content from bad; AI just speeds up the drafting.

What to do if you're worried about content you've already published

If you've already shipped a lot of AI-assisted content and you're wondering whether you've quietly damaged your site, this is the audit we'd run. It takes about an hour for a small site, longer if you've been publishing aggressively.

- Pull a list of every page on your site that's getting fewer than 10 organic clicks per month (Search Console's Performance report works for this). These are the candidates. High-traffic pages that are working don't need attention.

- Sort that list by impressions. Pages with high impressions and low clicks are the easiest to fix, usually with a title, meta description, or intro rewrite. Pages with low impressions and low clicks need a deeper look.

- Read the lowest-performing pages as a stranger would. If the article doesn't answer the searcher's question in the first two paragraphs, doesn't add anything beyond what the SERP already says, or feels like it could have been written about any topic with the words swapped, it's a refresh candidate.

- Refresh, don't delete. Add a real point of view, original examples, an updated date, and one element a model couldn't have produced (a screenshot, a chart, a quote, a personal observation). Keep the URL. Update the lastmod in your sitemap.

- Cap incoming volume to what your editorial process can review. This is the rule that prevents the next round of audits.
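The first two audit steps can be scripted against a page-level performance export. Search Console reports clicks rather than visits, so this sketch uses clicks as the proxy. It assumes a CSV with `page`, `clicks`, and `impressions` columns covering one month; rename the headers to match your actual export. The 1,000-impression cutoff for "high impressions" is a hypothetical threshold.

```python
import csv
import io


def triage(csv_text: str, max_clicks: int = 10, high_impressions: int = 1000) -> dict[str, list[str]]:
    """Sort low-click pages into snippet fixes vs. deeper content reviews."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    # Step 1: candidates are pages under the monthly click threshold.
    low = [r for r in rows if int(r["clicks"]) < max_clicks]
    # Step 2: highest impressions first, since those are the quickest wins.
    low.sort(key=lambda r: int(r["impressions"]), reverse=True)
    return {
        "rewrite_title_meta_intro": [r["page"] for r in low if int(r["impressions"]) >= high_impressions],
        "deep_review": [r["page"] for r in low if int(r["impressions"]) < high_impressions],
    }


# Hypothetical export: /b is doing fine and drops out of the audit.
sample = "page,clicks,impressions\n/a,3,5000\n/b,50,9000\n/c,2,40\n/d,0,1200\n"
print(triage(sample))  # {'rewrite_title_meta_intro': ['/a', '/d'], 'deep_review': ['/c']}
```

With a real export you'd pass in the file contents, for example `open("pages.csv", encoding="utf-8").read()`. The "deep_review" bucket is the list to read as a stranger would.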

You don't need to disavow anything. You don't need to add an "AI disclosure" banner. You don't need to noindex pages unless they're actually duplicate or thin. The audit is about quality, not about anything specific to AI.
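Bumping `lastmod` for refreshed URLs takes only the Python standard library if your sitemap is a plain XML file rather than one your CMS generates. This is a minimal sketch. It assumes a single `urlset` sitemap in the standard sitemaps.org namespace, not a sitemap index, and the date shown is a placeholder.

```python
import xml.etree.ElementTree as ET

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
ET.register_namespace("", NS)  # serialize without an "ns0:" prefix


def bump_lastmod(sitemap_xml: str, refreshed: set[str], date: str) -> str:
    """Set <lastmod> to `date` (W3C format, e.g. YYYY-MM-DD) for each refreshed URL."""
    root = ET.fromstring(sitemap_xml)
    for url in root.findall(f"{{{NS}}}url"):
        loc = (url.findtext(f"{{{NS}}}loc") or "").strip()
        if loc in refreshed:
            lastmod = url.find(f"{{{NS}}}lastmod")
            if lastmod is None:  # add the element if the entry never had one
                lastmod = ET.SubElement(url, f"{{{NS}}}lastmod")
            lastmod.text = date
    return ET.tostring(root, encoding="unicode")


sitemap = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://example.com/a</loc><lastmod>2024-01-01</lastmod></url>"
    "<url><loc>https://example.com/b</loc></url>"
    "<url><loc>https://example.com/c</loc></url>"
    "</urlset>"
)
print(bump_lastmod(sitemap, {"https://example.com/a", "https://example.com/b"}, "2026-04-20"))
```

Only URLs you actually refreshed get a new date. Untouched pages keep their existing `lastmod`, so the signal stays honest.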

Why we built Soro around this

This is the same pattern we see working every day with the people using Soro now: content built around the customer's real knowledge of their business, written to genuinely help the searcher, fully inside Google's E-E-A-T criteria, and not, in Google's own words, generated "with the primary purpose of manipulating ranking in search results." That's the line. Sites that stay on the helpful side of it keep ranking. Sites that drift across it get filtered, regardless of who or what produced the content. We work with this mindset daily, and the customer outcomes are the evidence the framework holds up.

Soro automates the full content pipeline, not just the drafting step that most "AI SEO tools" stop at. Keyword research, brief generation, brand-voice drafting, fact-checking, original-element insertion, on-page optimization, internal linking, CMS publishing. The drafting piece turned out to be the easiest part once everything around it was solved, and it's the only piece most products automate. The point of the product was never "AI content." The point is content that ranks, produced by AI plus the editorial layer that makes AI content actually rank.

Frequently asked questions

Can Google detect AI content?

Google has never publicly confirmed that it detects AI-generated content. Independent AI detectors exist but are imperfect, and even if Google ran one internally, the Ahrefs data suggests it doesn't act on the output. The closest practical signal is the helpful content system, which evaluates the page itself, not the production method.

Will AI content rankings tank in the next algorithm update?

Three years and seven core updates later (most recently the March 2026 core update), sites running AI-assisted content with editorial review continue to rank. Sites running unedited AI volume have suffered, in the same way sites running unedited human-written volume suffered before them. The variable is editorial process, not the algorithm. That's why Soro runs a proprietary model that layers brand DNA on top of multiple revision passes, so AI-assisted drafts get the kind of editorial review that used to take a human team.

Can AI content earn backlinks?

Backlinks come from useful content. If the AI-assisted article delivers a useful asset (data, an opinion, an original framework, a tool), links follow. The asset matters. The production method is invisible to the linker.

Can AI content build topical authority?

Authority comes from consistent publishing on a defined topic, real expertise demonstrated in the work, and external validation. AI can accelerate the publishing part significantly. The other two are still on you.

Is it better to disclose that an article was written with AI?

Google does not require AI disclosure and does not factor disclosure into ranking. Some publishers disclose for transparency reasons. From a pure SEO point of view, disclosure is neutral. The page either helps the searcher or it doesn't.

Bottom line

The penalty fear is the wrong question. The right question is whether your AI workflow includes the parts a model can't do on its own: a real editorial pass, original first-hand input, fact verification, and a volume cap that matches your review capacity.

If those four things are in your process, you're fine. The pattern has held across every Google core update from the March 2024 helpful-content fold-in through the March 2026 core update. If they aren't, the algorithm update isn't what's going to hit you. Indifference will. Pages that don't help anyone don't rank, no matter who or what wrote them.

Most of the people who asked us this question in 2023 ended up using AI-assisted content within a year. None of them got penalized. Most of them shipped more than they ever had before. The ones who shipped the most without losing rankings were the ones who treated AI as a faster first draft, not a finished article.

Further reading:

- Auto SEO Software: Full Autopilot for 2026? — How automated content production actually works in practice

- GEO vs SEO: What's the Real Difference in 2026 — Optimizing for AI search alongside Google

- Is SEO Dead in 2026? — The honest take on SEO in the AI search era

- Writing Content That Ranks — The editorial layer that makes AI content perform